Contributed by John Bergman-McCool

Hi there! My name is John and I am the new Inventory Specialist at the Robert S. Peabody Institute. As Inventory Specialist, my primary task is to work on the ongoing inventory and rehousing project. The project’s goals are to fully understand the collections that are held at the Institute and move them from their old wooden drawers into archival boxes. Armed with more precise knowledge of what is in the Peabody, the Institute can ensure the collections’ continued care and share them with students and the public for years to come.

This position is a dream job for me. It brings together my interests in archaeology, museums, and collections care. And who doesn’t love spending time underground?

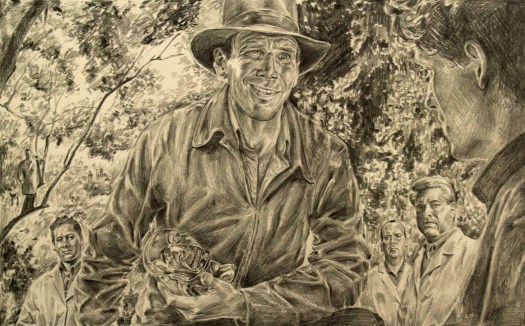

Before moving to Massachusetts in 2013, I worked for almost a decade as an archaeologist in the Pacific Northwest and Arizona. After relocating to New England I enrolled in the MFA program at Tufts and the School of the Museum of Fine Arts. During my time as a graduate student I found that I kept coming back to archaeology and the history of museum collections as a subject for my artwork.

While I was in graduate school I also pursued a certificate in Museum Studies. I gravitated towards collections care, and since graduating I’ve worked in collections at the Fitchburg Art Museum and Historic New England.

In a roundabout way I’ve come back to archaeology, though it’s been following me for the past five years. During my time here I’ve been inventorying objects from Missouri. There have been some surprising finds, which has been great. You never know what you’re going to find here at the Peabody.